Data Visualization of Panda Go

PandaGo: The Travel Recommendation System

By: Wenchang Qian, Roger Ren, Andrew Dodd

Introduction

We would like to start this blog post off with a quote from Dean Sivley, president of Berkshire Hathaway Travel Protection (BHTP) on the emergence of data science in the travel industry:

"The travel industry has always evolved from disruption, whether through air travel or as the Internet opened up the world to travelers. Today, data science is empowering mobile-enabled travelers through more customized shopping, booking and travel experiences. These innovations have touched every space in the travel industry, including travel insurance, to make travel more personalized, faster, and easier."

Motivation

Sivley's quote is one of the seeds of our project: to build a fast, easy, and personalized travel recommendation system aimed at the consumer. There are many ways to personalize recommendations, such as collaborative filtering, customizable search queries through natural language processing (NLP), and clustering or segmentation of locations.

Our team chose to pursue the search query route, with the hope of exploring other methods in the future. We also reasoned that price would be a major concern for our consumers (often millennials), so we wanted to incorporate a "Best Price" feature. To summarize, our goal was to create a smart travel recommendation website that uses a range of machine learning algorithms to do more than simple filtering.

Contents

- The Data

- The Framework

- The Search Algorithm

- Price Prediction

- Conclusion

1. The Data

We decided to use Airbnb listings as the places people can choose for their vacation. The Airbnb dataset is sourced from Inside Airbnb, which periodically scrapes the Airbnb website for major cities around the world. Given the scope and time limit of the project, we used only the March 2018 New York City data to demonstrate it.

The dataset contains three .csv files: the listings, the reviews, and the calendar. The listings file contains approximately 48,000 rows with more than 90 features. The reviews file contains all the reviews for each listing in New York City, along with each reviewer's ID. The calendar file contains the daily availability and price of each listing from the scrape date to roughly one year later.

Scraping Expedia

In addition to the Airbnb dataset, we scraped Expedia to build a dataset of flight prices for NYC, LA, Chicago, Boston, and San Francisco covering the next 250 days. Expedia does offer an API, but a long-term goal of this project is flight price prediction, which would help the consumer save money wherever possible. A useful prediction method needs a large amount of flight data, far more than a few API queries made each time a user visits the website can provide. The plans for this data are discussed further in the conclusion.

To scrape Expedia as fast as possible, we combined several methods in a Python script: multiple headers, multiple proxies, and multiprocessing.

First, a set of ~25 headers was created to help randomize the apparent origin of the requests. Next, a number of proxies were scraped from a free proxy website. Because the proxies are free, they are often overloaded, far away, or poorly connected. To weed out the slow ones, each proxy was tested by querying a random website (reddit, neopets, etc.). If the response came back in less than two seconds, the proxy was kept; otherwise it was discarded for being too slow.
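The proxy test described above can be sketched roughly as follows. This is a minimal illustration using only the Python standard library, not the original script: the `USER_AGENTS` list, the probe URL, and the function names are assumptions made up for the example. The probe is passed in as a parameter so the timing logic can be exercised without real network access.

```python
import random
import time
import urllib.request

# Hypothetical header values; the original set of ~25 headers is not shown in this post.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_3)",
    "Mozilla/5.0 (X11; Linux x86_64)",
]
MAX_SECONDS = 2.0  # cutoff from the text: keep proxies that answer in under two seconds


def probe_via_proxy(proxy, url="https://www.reddit.com", timeout=MAX_SECONDS):
    """Fetch `url` through `proxy` (e.g. "http://1.2.3.4:8080") with a random User-Agent."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    opener = urllib.request.build_opener(handler)
    request = urllib.request.Request(
        url, headers={"User-Agent": random.choice(USER_AGENTS)}
    )
    with opener.open(request, timeout=timeout) as response:
        response.read()


def filter_fast_proxies(proxies, probe=probe_via_proxy, max_seconds=MAX_SECONDS):
    """Return the proxies whose probe succeeds in under `max_seconds`."""
    fast = []
    for proxy in proxies:
        start = time.monotonic()
        try:
            probe(proxy)
        except Exception:
            # Any failure (refused, unreachable, timed out) counts as a bad proxy.
            continue
        if time.monotonic() - start < max_seconds:
            fast.append(proxy)
    return fast
```

Calling `filter_fast_proxies(candidate_proxies)` with a list of proxy URLs would return only those that responded within the cutoff.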

Multiprocessing

The third piece of the puzzle was multiprocessing. Multiprocessing allows our script to use the proxies in a distributed manner, speeding up the scraping by a factor of 10-50x depending on the exact parameters used. With a single Python process, our scraper could spend a few seconds waiting for a single proxy to respond.

While it waits, nothing else happens. The problem is I/O bound, meaning the processor sits idle waiting for network responses; it is not CPU bound. So we have a few valid options here, since Python offers a variety of multithreading and multiprocessing implementations. We chose the standard-library multiprocessing module, which, though a little CPU inefficient, is fast and effective on a laptop. Here is a snippet of code to summarize the approach used:

Findings

Note the commented lines for multithreading: it would not be too difficult to rewrite this code using multithreading. In fact, one would probably only change a few lines of code. This would be a good idea if one wanted to free up CPU for other tasks.
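As a rough illustration of this pattern (a sketch, not the original script), the standard library exposes process pools and thread pools behind the same interface, so switching between them is a one-argument change. The `scrape_all` and `fetch_flights` names, the job tuples, and the returned dictionary are hypothetical placeholders.

```python
from multiprocessing import Pool
from multiprocessing.pool import ThreadPool


def scrape_all(jobs, worker, pool_cls=Pool, n_workers=8):
    """Run `worker` on every job with a pool of `n_workers`; results keep job order."""
    with pool_cls(n_workers) as pool:
        return pool.map(worker, jobs)


def fetch_flights(job):
    """Placeholder worker: a real one would request one search page through a proxy."""
    origin, destination, day_offset = job
    return {"route": f"{origin}-{destination}", "day_offset": day_offset}
```

With process pools, the worker must be a top-level function and the call should sit under an `if __name__ == "__main__":` guard. Passing `pool_cls=ThreadPool` runs the same jobs on threads instead, which is usually enough for I/O-bound work and lighter on CPU.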

With the Airbnb and flight data, we now have enough information to build a light trip recommendation system.

2. The Framework

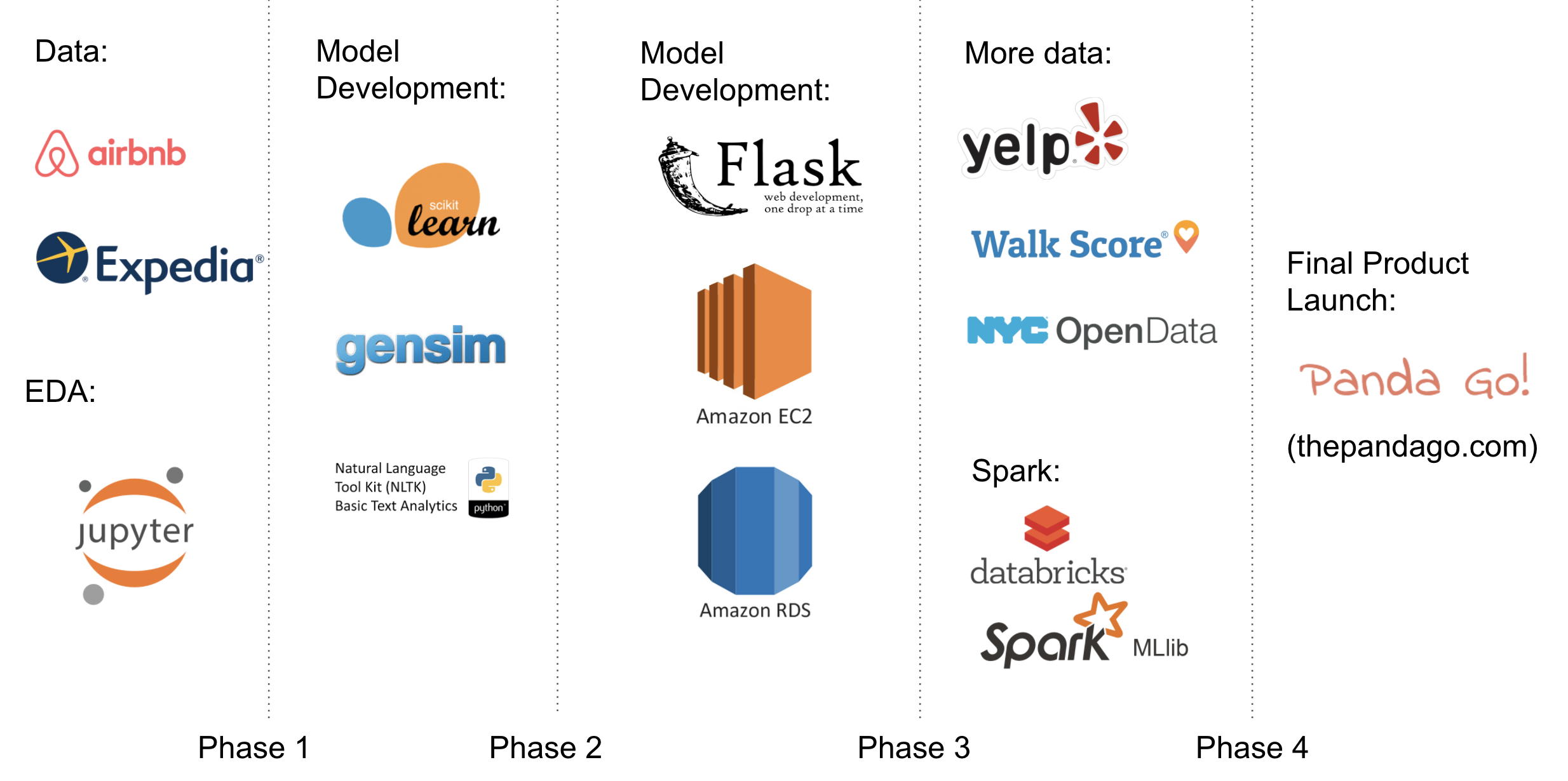

The roadmap graph shows the PandaGo development cycle and the high-level technical frameworks used. We followed an agile development approach throughout the capstone, which enabled fast testing of new concepts and a steady pace of delivery.

As of the publication of this blog post (2018/04/01), PandaGo has gone through several iterations (to the end of Phase 3): data collection, data cleaning, model setup, database construction, and front-end Flask development (pre-alpha version: thepandago.com). Further development work (Phase 4) is scheduled for the final release of the product, including the integration of more data sources and a move to a big-data machine learning platform (powered by Spark MLlib).

Phase 1:

- Data collection and EDA: Raw data were obtained from Inside Airbnb and scraped from Expedia as described above. Data cleaning and initial exploratory data analysis (EDA) were performed in Jupyter notebooks. Key findings from the EDA guided the model development phase.

Phase 2:

- Model development: scikit-learn was used to develop the price prediction model. The main search algorithm was built on NLP models developed with the NLTK and gensim packages.

Phase 3:

- Front-end development: PandaGo is powered by an AWS EC2 instance. The NLP models built in Phase 2 were moved from local computers to the EC2 instance to support end-user search queries. Results from the price prediction model were merged into the main dataset and stored in AWS RDS for reliable query performance.

- thepandago.com was built using the Flask web framework. Our website offers the end user a smart and intuitive approach to selecting travel destinations. Users enter their preferences by clicking sample graphs, which forms an input string for the NLP model. The best-matching results are returned to the user.

Phase 4:

- Future work: more data sources will be integrated to offer better-informed search results: Yelp for nearby commercial establishments, WalkScore for a pedestrian-friendliness metric, and NYC Open Data for a safety measure.

- Move to big data: the database and machine learning models will be moved to Spark, and all models will be rebuilt as online machine learning models for continuous learning.

3. The Search Algorithm

The core idea behind the project is to make the travel planning process as easy and smart as possible. One way we came up with is to let users type in a search query such as "New York pets allowed close to subway". The model we chose is Doc2Vec: the input query is vectorized with the trained model and compared against the vectors of the listing descriptions, and the listings with the most similar descriptions are returned.

Doc2Vec Model

- Preprocessing: We first tokenized the listing descriptions and filtered out the stopwords. Then we lemmatized the listing descriptions to reduce each word to its root. We chose lemmatization over stemming because we wanted the output listing descriptions to be more readable for validation purposes.

- Modeling: We instantiated a Doc2Vec model and trained it on all the listing descriptions. For an example user input string, "pet allowed private room close to transit close to bar close to restaurant", we used the infer_vector function from the gensim package to vectorize the input.

- Model Results: With the trained model, we retrieved the listing descriptions most similar to the input string "pet allowed private room close to transit close to bar close to restaurant".
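Once the query and the listing descriptions are both vectors, finding the most similar listings comes down to ranking by cosine similarity. The sketch below shows only that ranking step in plain Python. The three-dimensional vectors and listing ids are made up for illustration and stand in for real Doc2Vec output; in the actual pipeline the query vector would come from gensim's infer_vector.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(query_vec, listing_vecs, topn=3):
    """Rank listing ids by cosine similarity to the query vector, best first."""
    scored = [
        (listing_id, cosine_similarity(query_vec, vec))
        for listing_id, vec in listing_vecs.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:topn]


# Toy vectors standing in for trained description vectors (hypothetical values).
listing_vecs = {
    "listing_a": [0.9, 0.1, 0.0],
    "listing_b": [0.0, 1.0, 0.2],
    "listing_c": [0.7, 0.3, 0.1],
}
query_vec = [1.0, 0.2, 0.0]  # in the real pipeline: model.infer_vector(tokens)
ranked = most_similar(query_vec, listing_vecs, topn=2)
```

Here `ranked` holds the two closest listings with their similarity scores, which is the shape of result the website would show to the user.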

4. Price Prediction

An important factor in most people's trip booking decisions is cost. How can I get the most out of my money? To address this question, our team decided to build a model that predicts price from Airbnb listing attributes like number of rooms, location, and amenities offered. This predicted price would be indicative of the perceived worth of a location.

By comparing the perceived worth (predicted price) with the true price, we can see if the true price is higher or lower than the perceived worth. If the true price is higher than its perceived worth, then it is more likely that the listing is overpriced. If it is lower than its perceived worth, it is more likely to be underpriced, or a good deal for the traveler. To begin, we needed a model.

Random Forest

The model chosen was a random forest, trained on ~15,000 locations in Manhattan each with ~430 features. The first 230 features were options like number of rooms, guests, beds, the ratings, the longitude and latitude, and so forth. The last 200 features were the vector representation of the listing descriptions from the Doc2Vec model. Another wrench in the works was the cleaning fee.

In some cases, this could be as high as $60 for one night, though its average was closer to $20/night. To account for this, the cleaning fee was divided by the minimum number of nights and then added to the price (e.g. with a $70 price and a $30 cleaning fee on a 2-night minimum, the full price is $70 + $30/2 = $85).
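The adjustment above is a one-line formula. A minimal sketch, with a hypothetical function name:

```python
def full_nightly_price(price, cleaning_fee, minimum_nights):
    """Nightly price plus the cleaning fee spread over the minimum stay."""
    if minimum_nights < 1:
        raise ValueError("minimum_nights must be at least 1")
    return price + cleaning_fee / minimum_nights


example = full_nightly_price(70, 30, 2)  # the example from the text: 85.0
```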

Using this data, with the transformed price as the label, the random forest achieved a test RMSE of 0.35 on the log of the price. It is often hard to imagine exactly what that means; effectively, a typical prediction might be $90 when the true price is $125. Given how much NYC varies from block to block, and that the quality of the room is not well reflected in the features, we thought this was a reasonable error.
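To make the error figure concrete, the sketch below computes RMSE on log prices and checks the $125 versus $90 example. It assumes the natural logarithm, which the text does not state explicitly, and the function name is hypothetical.

```python
import math


def log_rmse(true_prices, predicted_prices):
    """Root mean squared error between log(predicted) and log(true) prices."""
    errors = [
        math.log(predicted) - math.log(true)
        for true, predicted in zip(true_prices, predicted_prices)
    ]
    return math.sqrt(sum(e * e for e in errors) / len(errors))


# A single listing with true price $125 and prediction $90.
single_case = log_rmse([125], [90])  # about 0.33, close to the reported 0.35
```

Under the natural-log assumption, an error of 0.35 corresponds to the prediction being off by a factor of about exp(0.35), or roughly 1.4, in either direction.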

Visualize Error Distribution

The next step was to visualize the error distribution. A plot of the errors between predicted and true prices showed that they approximate a normal distribution with a mean of zero and a standard deviation of 0.35. Using this fact and knowledge of normal distributions, we find that listings whose true price falls below the predicted price by at least 1.57 standard deviations are roughly the best 5% of prices from a consumer perspective (the exact one-sided 5% cutoff of a normal distribution is about 1.645 standard deviations; 1.57 captures closer to 6%). In other words, we are finding locations with a high predicted price (AKA high perceived worth) and a low true price.

Using this knowledge, we can add a feature to our dataset: "Good Price" or "Reasonable Price". The "Good Price" label is applied to any listing in the best 5% of errors, where the predicted price is significantly higher than the true price, and the "Reasonable Price" label is given to the rest of the listings (the rest are not necessarily reasonably priced, but we have insufficient information to say that they are not). On the website, the "Good Price" listings are highlighted to the user.
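The labeling rule can be sketched with the standard library's NormalDist. The 0.35 standard deviation and the 1.57 cutoff come from the text; the function name, the use of natural-log errors, and the sign convention (predicted minus true) are assumptions for illustration.

```python
import math
from statistics import NormalDist

SIGMA = 0.35     # standard deviation of the log-price errors, from the text
Z_CUTOFF = 1.57  # cutoff in standard deviations, from the text

# Sanity checks on the cutoff under a standard normal distribution.
exact_5pct_cutoff = NormalDist().inv_cdf(0.95)  # about 1.645
tail_at_cutoff = 1 - NormalDist().cdf(Z_CUTOFF)  # about 0.058


def price_label(true_price, predicted_price, sigma=SIGMA, z=Z_CUTOFF):
    """Label a listing 'Good Price' when its true price is far below the prediction."""
    error = math.log(predicted_price) - math.log(true_price)
    return "Good Price" if error >= z * sigma else "Reasonable Price"
```

For example, a listing priced at $100 with a predicted price of $200 has a log error of about 0.69, above the 1.57 × 0.35 ≈ 0.55 threshold, so it would be labeled "Good Price".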

5. Conclusion

In conclusion, we created a travel website to help technology-oriented users get personalized recommendations. This was done with a combination of Doc2Vec search queries, room deal prediction, data scraping, and a database for quick information retrieval. A fun, interactive website built on a Flask front end takes the user from personalization questions to room suggestions in a few clicks.

Improvements

There are more ways to improve our website and models than we can count, but to name a few:

- City Data: We would add more cities. A travel website needs more than NYC data to be a true travel website. City information could also help users decide where to go based on the types of activities they like, such as skiing or swimming.

- Hotel Data: Similarly, we would add other room options, such as data from Hotels.com, Expedia hotels, TripAdvisor, etc.

- Price Prediction: Our price prediction model is not up to the quality we would like. A more accurate model would mean better "Good Price" suggestions for the user, or even broader options like "Excellent Price", "Good Price", "Fair Price", and "Bad Price". Without a better model, these options cannot be decided accurately. A great place to start improving the model could be time effects: people generally pay more to rent an Airbnb in June or July.

- Improved Queries: Currently, a user can perform queries with a long list of words. Often, the important words (like "bringing pets") get lost in a search query of 15-25 words. A smarter search algorithm may be necessary.

- Airline Prices: Though we have airline price information, it is not part of our search results. For a thorough travel website, flight prices are a must-have. Given enough data, an additional feature might be flight price prediction.

- Constantly Updating: Our website is currently built on a static dataset, not a self-refreshing pipeline of new data. To keep our website running strong, a scraping pipeline for room listings and flights could be implemented so our users have access to constantly changing real-world availability.

With some time investment, this website has the potential to be a truly valuable tool in helping the consumer decide where to pack their bags for next!

Thanks for reading!

Sincerely,

The Pandas

Roger, Wenchang, and Andrew