An Interpretable Prediction Model for House Prices

Background

Whether it’s a newlywed couple looking to start a family or an investor looking to diversify their portfolio as a landlord, purchasing a home is a major life event. Often, buyers need to balance items on their house ‘wish-list’ with a set budget. Similarly, housing developers want to know which features will make a property stand out, enticing buyers to choose their offerings over their competitors’. Our team explored these topics and more using the Ames, Iowa housing dataset from Kaggle.

Though this Kaggle dataset project is a competition for the best house-price prediction score, our team wanted to focus on learning how to apply fundamental statistical machine learning models. With that emphasis, the team was comfortable using models whose mechanisms are easier to interpret, such as linear regression, over more complex models such as boosting.

We applied basic city demographic analysis and some creativity of our own to build the dataset fed into our machine learning models. The feature engineering steps are described below, along with the results and findings of the linear regression model. That is followed by a look at potential improvements using more complex models.

Feature Processing & Engineering

The Kaggle-provided dataset contains 79 features, of which 51 are categorical and 28 are continuous. Reviewing each feature, we identified problems such as sparse data (a high percentage of zeros or missing values), numerous sub-categories, and unbalanced categories (very different numbers of observations across sub-categories). A few examples of our solutions to these problems are described below and in the appendix.

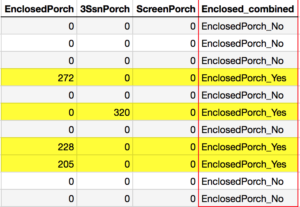

One critical problem we encountered several times was sparse data. For example, the dataset records the square footage of three different types of porches. This is problematic because over 90% of houses do not have any form of porch. We collapsed the information from these three continuous features into one binary feature indicating whether or not the house has an enclosed porch. This not only reduced dimensionality but also preserved information about a relatively uncommon feature.
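The porch collapse described above amounts to summing the square-footage columns and checking whether the total is positive. The sketch below assumes each house is a dict. The column names (`EnclosedPorch`, `3SsnPorch`, `ScreenPorch`) are modeled on the Kaggle data description, but the post does not say which three porch features were collapsed, so treat them as assumptions. The output name `HasEnclosedPorch` is hypothetical.

```python
# Assumed porch columns (modeled on the Kaggle Ames data description).
PORCH_COLUMNS = ("EnclosedPorch", "3SsnPorch", "ScreenPorch")


def add_porch_flag(rows, porch_columns=PORCH_COLUMNS):
    """Replace several porch square-footage columns with one 0/1 indicator.

    rows: list of dicts, one per house. Missing or None values count as 0 sq ft.
    Returns new dicts; the input rows are left unmodified.
    """
    out = []
    for row in rows:
        # Drop the sparse porch columns from the new row.
        new_row = {k: v for k, v in row.items() if k not in porch_columns}
        total_sqft = sum((row.get(col) or 0) for col in porch_columns)
        new_row["HasEnclosedPorch"] = int(total_sqft > 0)  # hypothetical feature name
        out.append(new_row)
    return out


houses = [
    {"Id": 1, "EnclosedPorch": 0, "3SsnPorch": 0, "ScreenPorch": 0},
    {"Id": 2, "EnclosedPorch": 112, "3SsnPorch": 0, "ScreenPorch": None},
]
print(add_porch_flag(houses))
# [{'Id': 1, 'HasEnclosedPorch': 0}, {'Id': 2, 'HasEnclosedPorch': 1}]
```

With pandas, the same idea would be a row-wise sum over the porch columns followed by a `> 0` comparison.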

Unbalanced categorical features are a common issue within this dataset. We found that features with multiple sub-categories often included sub-categories with fewer than 5% of all observations. Our general approach was to use 5% as a threshold for grouping these sub-categories. Each sub-category was evaluated individually and combined with others in a logical manner. For example, the Building Type feature originally split townhomes into inside and end units; these were combined into a new ‘Townhouse’ sub-category. Similarly, a two-family conversion home was considered equivalent to a duplex, and both types were classified as ‘Duplex’. Before this grouping, several sub-categories within the feature each held less than 5% of the observations. After feature engineering, every sub-category held more than 5%.
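The 5% rule described above has two parts. First, flag the sub-categories below the threshold. Then apply a hand-chosen mapping and re-check. In the sketch below, the raw `BldgType` codes (`1Fam`, `2fmCon`, `Duplex`, `TwnhsE`, `Twnhs`) follow the Kaggle data description; treat their exact spelling as an assumption. The observation counts are made up for illustration.

```python
from collections import Counter

# Hand-chosen merges in the spirit of the Building Type example.
BLDG_TYPE_MAP = {
    "1Fam": "OneFamily",
    "TwnhsE": "TownHouse",  # townhouse end unit
    "Twnhs": "TownHouse",   # townhouse inside unit
    "Duplex": "Duplex",
    "2fmCon": "Duplex",     # two-family conversion treated as a duplex
}


def category_shares(values):
    """Fraction of observations falling in each sub-category."""
    counts = Counter(values)
    n = len(values)
    return {cat: c / n for cat, c in counts.items()}


def rare_categories(values, threshold=0.05):
    """Sorted list of sub-categories holding less than `threshold` of observations."""
    return sorted(cat for cat, share in category_shares(values).items() if share < threshold)


# Made-up counts: 100 houses.
raw = ["1Fam"] * 80 + ["TwnhsE"] * 6 + ["Twnhs"] * 4 + ["Duplex"] * 6 + ["2fmCon"] * 4
print(rare_categories(raw))      # ['2fmCon', 'Twnhs']
grouped = [BLDG_TYPE_MAP[v] for v in raw]
print(rare_categories(grouped))  # []
```

The threshold only flags candidates. The merge itself stays a judgment call encoded in the mapping, which matches the "combined in a logical manner" approach described above.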

We also engineered a new feature based on univariate analysis: how many houses were sold in a given month? Combining two existing features (month sold and year sold) into a date-time object reveals a clear seasonal pattern in the number of houses sold. Every summer, specifically in May, June, and July, the number of houses sold reaches its peak. This agrees with our understanding of summer as the peak season for the real estate market. Consequently, year sold and month sold were collapsed into one binary feature: peak versus off-peak season.
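The two steps above are counting sales per (year, month) and then reducing each sale to a peak/off-peak flag. A minimal sketch follows. The field names `YrSold` and `MoSold` follow the Kaggle data description and are assumptions here; the sales records are made up.

```python
from collections import Counter

# Peak months identified from the monthly sales counts.
PEAK_MONTHS = {5, 6, 7}  # May, June, July


def monthly_sales(sales):
    """Count sales per (year, month); `sales` holds (YrSold, MoSold) pairs."""
    return Counter(sales)


def is_peak_season(month):
    """1 if the sale month falls in the peak season, else 0."""
    return int(month in PEAK_MONTHS)


sales = [(2008, 6), (2008, 6), (2008, 12), (2009, 5)]
print(sorted(monthly_sales(sales).items()))
# [((2008, 6), 2), ((2008, 12), 1), ((2009, 5), 1)]
print([is_peak_season(month) for _, month in sales])  # [1, 1, 0, 1]
```

The counts are only used to spot the seasonal pattern. The model itself sees just the binary flag.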

Neighborhood was a challenging feature to engineer because it contains 25 classes. We developed a custom grouping according to geographical location. Squaw Creek naturally bisects Ames, with Iowa State University located in the southwest corner and high-density residential buildings southeast of the river. Commercial areas and Floating Village (zoned, undeveloped) lie west of U.S. Highway 69 (Grand Avenue). This suggests a commercial high-density area to the west of Grand Avenue and a more residential high-density area to the east of it. We used these natural divisions and the zoning feature map to reduce 25 classes (neighborhoods) into three (regions), seen below.

Fitting a Multiple Linear Regression (Model I)

Now that we have features that are in tip-top shape, we can proceed to modeling.

A multiple linear regression (MLR) is a good starting model because it is easily interpretable compared to more complex models. However, some important assumptions must be verified to determine whether MLR is an appropriate choice, including constant variance and normally distributed residuals. We tested the adequacy of an MLR model by checking these assumptions, and we discuss the findings in the appendix.

The initial residual plot revealed problems for our MLR approach, including heteroscedasticity and outliers. In the left plot, the red fitted line follows the horizontal zero line very closely, which means the linearity assumption may be satisfied. However, there appear to be some outlying points towards the upper right-hand corner. This may indicate non-constant variance, which can potentially be addressed by log-transforming the response. The residuals-versus-fitted-values plot for the log-transformed response is presented to the right of the first plot. Note that the point cloud is now randomly scattered around the horizontal zero line.

We can now reasonably claim that the constant variance assumption is satisfied. Note that in both plots, there are two outlying observations on the bottom right. Ultimately, these two observations were removed from our model.

We performed 5-fold cross-validation on the MLR model (Model I) to estimate the train and test mean squared errors (MSEs). The results are presented in the table below. For Model I, the train and test MSEs are almost the same, which means the model may not suffer from high variance. However, the train MSE is not close to zero, so the model may be biased. Ideally, the best model would predict house prices very well, with a train MSE at or very close to zero. Note that because the house prices were log-transformed, the MSEs appear very small. The table also presents the results for Model II, described below.
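The cross-validation procedure above repeats the same steps for each fold: fit on four folds, then record the MSE on those training folds and on the held-out fold. The sketch below does this with a dependency-free least-squares fit. It runs on synthetic log-price data, not the Ames data, and is not the team's actual code. In practice, scikit-learn's `cross_validate` with `return_train_score=True` reports both scores directly.

```python
import random


def ols_fit(X, y):
    """Least-squares coefficients (intercept first) via the normal equations.

    Assumes the design has full column rank (no perfectly collinear dummies).
    """
    rows = [[1.0] + list(x) for x in X]  # prepend an intercept column
    p = len(rows[0])
    # Augmented system [X^T X | X^T y], solved by Gauss-Jordan elimination.
    A = [[sum(r[i] * r[j] for r in rows) for j in range(p)]
         + [sum(r[i] * t for r, t in zip(rows, y))]
         for i in range(p)]
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))  # partial pivoting
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(p):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][p] / A[i][i] for i in range(p)]


def predict(beta, X):
    return [beta[0] + sum(b * v for b, v in zip(beta[1:], x)) for x in X]


def mse(y, y_hat):
    return sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y)


def kfold_mse(X, y, k=5, seed=0):
    """Average train and test MSE over k shuffled folds."""
    idx = list(range(len(y)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    train_scores, test_scores = [], []
    for test_idx in folds:
        held_out = set(test_idx)
        train_idx = [i for i in idx if i not in held_out]
        X_tr, y_tr = [X[i] for i in train_idx], [y[i] for i in train_idx]
        X_te, y_te = [X[i] for i in test_idx], [y[i] for i in test_idx]
        beta = ols_fit(X_tr, y_tr)
        train_scores.append(mse(y_tr, predict(beta, X_tr)))
        test_scores.append(mse(y_te, predict(beta, X_te)))
    return sum(train_scores) / k, sum(test_scores) / k


# Synthetic stand-in: log(price) driven by living area and an overall quality score.
rng = random.Random(42)
X = [[rng.uniform(800, 3000), rng.randint(1, 10)] for _ in range(200)]
y = [11.0 + 0.0004 * area + 0.05 * qual + rng.gauss(0, 0.1) for area, qual in X]
train_mse, test_mse = kfold_mse(X, y)
print(f"train MSE = {train_mse:.4f}, test MSE = {test_mse:.4f}")
```

Because the synthetic model is correctly specified, both MSEs land near the noise variance (0.1² = 0.01). This mirrors the "similar train and test MSE" pattern discussed above: low variance, with the remaining error not removable by this model.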

Improving the MLR model

We considered two approaches to further improve our model: subset selection (by univariate selection) and regularization.

Univariate Feature Selection (Model II)

In subset selection, we can build a model by adding features one at a time and selecting the best model. Alternatively, we can start with Model I and remove features until we arrive at the best model. However, with 69 dummified features, exhaustive (best) subset selection would be computationally expensive because we would have to consider 2^69 models! A compromise is to select the features in a univariate fashion. This can be accomplished with the KBest procedure (scikit-learn's SelectKBest) in Python using an F-test.
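Exhaustive search would need 2^69 ≈ 5.9 × 10^20 fits. Univariate selection instead scores each feature once against the response and keeps the top K. The sketch below computes a regression F statistic from each feature's correlation with y, F = r²/(1 − r²)·(n − 2). This is the same ranking idea behind scikit-learn's `SelectKBest` with `f_regression`, though it is not that library's code. The toy data are made up, and constant columns are assumed absent.

```python
import math


def f_scores(X, y):
    """Univariate regression F statistic of each column of X against y.

    Uses F = r^2 / (1 - r^2) * (n - 2), where r is the Pearson correlation.
    Assumes no column is constant (its variance would be zero).
    """
    n = len(y)
    y_mean = sum(y) / n
    syy = sum((b - y_mean) ** 2 for b in y)
    scores = []
    for j in range(len(X[0])):
        col = [row[j] for row in X]
        x_mean = sum(col) / n
        sxy = sum((a - x_mean) * (b - y_mean) for a, b in zip(col, y))
        sxx = sum((a - x_mean) ** 2 for a in col)
        r2 = sxy ** 2 / (sxx * syy)
        scores.append(math.inf if r2 >= 1.0 else r2 / (1.0 - r2) * (n - 2))
    return scores


def select_k_best(X, y, k):
    """Indices of the k columns with the largest F statistics, best first."""
    scores = f_scores(X, y)
    return sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:k]


# Toy data: column 2 is exactly linear in y, column 1 is close, column 0 is weak.
y = [1, 2, 3, 4, 5]
X = [[5, 1, 2], [1, 2, 4], [4, 3, 6], [2, 4, 8], [3, 6, 10]]
print(select_k_best(X, y, 2))  # [2, 1]
```

Ranking this way ignores correlations between features, which is the price paid for fitting only 69 one-feature models instead of 2^69 subsets. When it is combined with cross-validation, the scores should be computed on each training fold only, as was done for the MSE curve.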

Below is a plot of the MSEs as a function of the number of features selected by the KBest procedure. The MSEs were calculated from 5-fold cross-validation, with the features selected on each training set.

We can obtain some interesting insights from this plot:

- MSEs drop sharply once the best five features are included, and decay more slowly thereafter.

- The train and test MSEs diverge after around 28-30 features are included in the model.

- After 28-30 features, the test MSE stays roughly flat for around 10 additional features.

We therefore take the K=28 best-features model as our Model II. Note that the MSEs do not reach zero at 69 features (these are also the MSEs for Model I). Both train and test MSEs were higher for Model II than for Model I, so we preferred Model I on the basis of predictive accuracy.

Regularization

Regularization (also known as shrinkage) shrinks a model’s coefficient estimates toward zero. This typically increases the training error but can decrease the test error, yielding a more generalizable model. In other words, regularization reduces a model’s variance at the cost of some added bias. The two most popular regularization approaches are ridge and lasso. Both shrink the coefficient estimates, but lasso can shrink some coefficients exactly to zero, in effect also performing feature selection to produce a model with fewer features. A third approach, elastic net, combines the ridge and lasso penalties.
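Why lasso can zero out a coefficient while ridge only shrinks it is easiest to see in the idealized case of an orthonormal design (XᵀX = I), where both have closed forms in terms of the OLS estimate z. Assume ridge minimizes ½‖y − Xb‖² + (λ/2)‖b‖² and lasso minimizes ½‖y − Xb‖² + λ‖b‖₁. Then ridge gives z/(1 + λ), and lasso soft-thresholds z. This is a textbook illustration only. Real designs are not orthonormal, and tools such as scikit-learn's `Ridge` and `Lasso` solve the general problem numerically with their own penalty scaling. The coefficient values below are made up.

```python
def ridge_shrink(z, lam):
    """Ridge estimate under an orthonormal design: proportional shrinkage."""
    return z / (1.0 + lam)


def lasso_shrink(z, lam):
    """Lasso estimate under an orthonormal design: soft-thresholding."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0  # small coefficients are set exactly to zero


ols = [0.80, -0.30, 0.05]  # made-up OLS coefficients
lam = 0.1
print([round(ridge_shrink(b, lam), 4) for b in ols])  # [0.7273, -0.2727, 0.0455]
print([round(lasso_shrink(b, lam), 4) for b in ols])  # [0.7, -0.2, 0.0]
```

Ridge scales every coefficient by the same factor, so none reaches zero. Lasso subtracts a fixed amount, so any coefficient smaller than λ in magnitude is dropped. That is the feature-selection effect described above.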

As we noted earlier, Model I does not appear to suffer from high variance. We implemented both lasso and ridge regularization and confirmed that this is indeed the case, as the resulting train and test MSEs were similar. Lasso regularization zeroed the coefficients of 14 features. However, we discovered two seemingly undesirable effects of regularization, and of lasso in particular:

- When performing regularization via either lasso or ridge, the features have to be standardized so that the final fit does not depend on the scale on which they are measured. Recall that only one tuning parameter is used in either lasso or ridge, so it penalizes all coefficients on a common scale. This standardization should be applied to both continuous and dummified features (Tibshirani, 1996), which means that the dummies lose their categorical interpretations. There appears to be no way around this, and we have not yet found any literature source that provides guidance on this issue. For the purpose of prediction, this may not be a problem.

- Lasso can zero out the coefficients of only some of a categorical feature’s subcategories. For example, the categorical feature BldgType has three subcategories: OneFamily, TownHouse, and Duplex. The table below presents the coefficient estimates for Model I and the lasso model (Duplex is the reference category and not shown). Only one of the BldgType dummy coefficients is zeroed by lasso. We learned that one way to resolve this issue is Group Lasso, which zeroes the coefficients of all of a categorical feature’s subcategories together rather than just a subset of them.
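The contrast between lasso and Group Lasso can be sketched in the same idealized orthonormal-design setting used to explain soft-thresholding. Group Lasso penalizes λ·√p·‖β_g‖₂ for each group of p dummies, so a whole categorical feature is either dropped or kept. The two BldgType coefficients below (OneFamily and TownHouse, with Duplex as reference) are hypothetical values, not the estimates from our table.

```python
import math


def soft_threshold(z, lam):
    """Ordinary lasso under an orthonormal design: each coefficient on its own."""
    return math.copysign(max(abs(z) - lam, 0.0), z)


def group_soft_threshold(z_group, lam):
    """Group lasso under an orthonormal design, one categorical feature at a time.

    Penalty: lam * sqrt(p) * ||beta_group||_2, where p is the number of dummies
    in the group. The whole group is either zeroed or shrunk together.
    """
    p = len(z_group)
    norm = math.sqrt(sum(v * v for v in z_group))
    if norm == 0.0:
        return [0.0] * p
    scale = max(0.0, 1.0 - lam * math.sqrt(p) / norm)
    return [scale * v for v in z_group]


# Hypothetical OLS estimates for the BldgType dummies (OneFamily, TownHouse).
z = [0.04, 0.01]
print([round(soft_threshold(v, 0.02), 4) for v in z])        # [0.02, 0.0]
print([round(v, 4) for v in group_soft_threshold(z, 0.02)])  # [0.0126, 0.0031]
print(group_soft_threshold(z, 0.04))                         # [0.0, 0.0]
```

With the same λ, plain lasso keeps OneFamily but drops TownHouse. Group Lasso keeps both (shrunk) at λ = 0.02 and drops both at λ = 0.04, so BldgType is never split.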

So, will it be Model I or lasso? If a model with fewer features is desired, lasso would be the model to choose. Since their test MSEs are comparable, the predictive abilities of the MLR and lasso models are comparable. We would therefore choose Model I (MLR) because, with comparable predictive ability, it has the important advantage of a straightforward interpretation.

Reducing Model Bias

There are two immediate strategies for further reducing the bias in our model. The first is to reevaluate our pre-processing and feature engineering steps: predictive power may have been compromised by binning some continuous features, such as total basement square footage. However, we anticipate that these adjustments would reduce bias only in small increments, as most of the features in the model may be relatively small contributors to the predicted price of a home. The second strategy is to fit a more complex nonlinear model. Ensemble methods such as random forests and boosting may increase accuracy, but at the cost of model interpretability.

Figure Source: http://blog.fastforwardlabs.com/

Linear regression provides model interpretability and can perform relatively well on accuracy metrics. Non-linear models can be more accurate, but at the cost of interpretability.

Discussion

Total living area and quality of exterior building materials were the top two features for predicting house price, as ranked by the KBest procedure described above. These features make intuitive sense, and it would be interesting to evaluate whether they generalize across time and housing markets, which we could test with additional data from Ames or elsewhere.

Our engineered features allow for straightforward interpretation. One interesting feature we explored was whether a home was sold during the peak selling season of May, June, and July. According to our model, selling a home during this period commanded an extra 1.46% in sale price. This raises an interesting question: does this feature provide actionable information for realtor companies? Would it pay to try to optimize price by timing the sale? It is possible that costs such as advertising space also rise during the peak selling season. Additional cost-benefit analysis is warranted to generate actionable next steps, but our interpretable model could be combined with internal realtor data to develop new business strategies.
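Because the response is log(price), a coefficient β on a 0/1 feature such as peak season multiplies the predicted price by e^β. That is a 100·(e^β − 1)% change, which for small β is close to the shortcut 100·β%. The post does not state which convention produced the 1.46% (or 2.04%) figure. The sketch below therefore converts in both directions to show how small the difference is. The function names are illustrative.

```python
import math


def pct_effect(beta):
    """Percent change in price when a 0/1 feature switches on, given a log(price) model."""
    return 100.0 * (math.exp(beta) - 1.0)


def beta_from_pct(pct):
    """Inverse: the log-scale coefficient implied by a percent effect."""
    return math.log1p(pct / 100.0)


beta_peak = beta_from_pct(1.46)          # coefficient implied by a 1.46% premium
print(round(beta_peak, 5))               # 0.01449
print(round(pct_effect(beta_peak), 2))   # 1.46
print(round(100 * beta_peak, 2))         # 1.45 (the 100 * beta shortcut)
```

At effect sizes this small, the exact and approximate readings differ by about 0.01 percentage points, so either interpretation supports the same conclusion.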

Nearly one-third of Ames, IA is employed in the education sector. Is there a premium for buying property near Iowa State University, whether it serves as a rental investment for a landlord or as housing for professors? Alternatively, are housing prices suppressed because of rowdy college parties? Apparently not. Treating South Ames as the neighborhoods south of Squaw Creek, our model suggests that these homes sold for a 2.04% higher sale price. This suggests a small premium on sale price for campus-adjacent housing.

Conclusion

We spent a large portion of our work on feature engineering and on fitting different models according to basic principles and standard approaches. We finally arrived at a simple model that we consider reasonable and useful. This model has good interpretability, but we learned that its predictive ability can be improved, perhaps only slightly. We could avoid collapsing subcategories as aggressively as we did. For example, instead of collapsing five categories into only two, we could try collapsing them into three or four. We could also be less aggressive in converting continuous and ordinal features into categorical ones, and further investigate the effect of high-leverage data points. Finally, we could investigate a potentially better-fitting tree-based model such as XGBoost. However, we note that fitting more complex nonlinear models may improve predictive ability at the expense of model interpretability.